Codeless Automation

Applause Codeless Automation is a new offering that enables anyone to create automated software tests without writing a line of code.

The Problem

Automated testing saves time and money, but it requires people to write test scripts. This means software engineers must write tests as they develop features, or QA teams need dedicated resources with the skills to write tests.

Conversations with customers made it clear that QA managers prefer automated testing to manual testing whenever possible, but they rarely have the resources or time to create tests at the pace engineers release features.

Codeless Automation was conceived to solve this problem: an in-browser tool that lets anybody, regardless of coding ability, create automated tests for mobile and web software.

Beginnings

Codeless Automation was initially built as a simple proof of concept by Jon, our engineering lead.

When the business decided to build it out as a fully-fledged product, I was brought on board and began to conduct research and sketch out wireframes.

Cathy (our product lead) and I convened a group of internal users. With a mix of manual and automated QA experience, they reflected the target user personas we were building for.

We met consistently with this internal user group to ask questions, test wireframes, and validate product decisions. Their feedback was invaluable as we iterated on design ideas.

Creating a Test

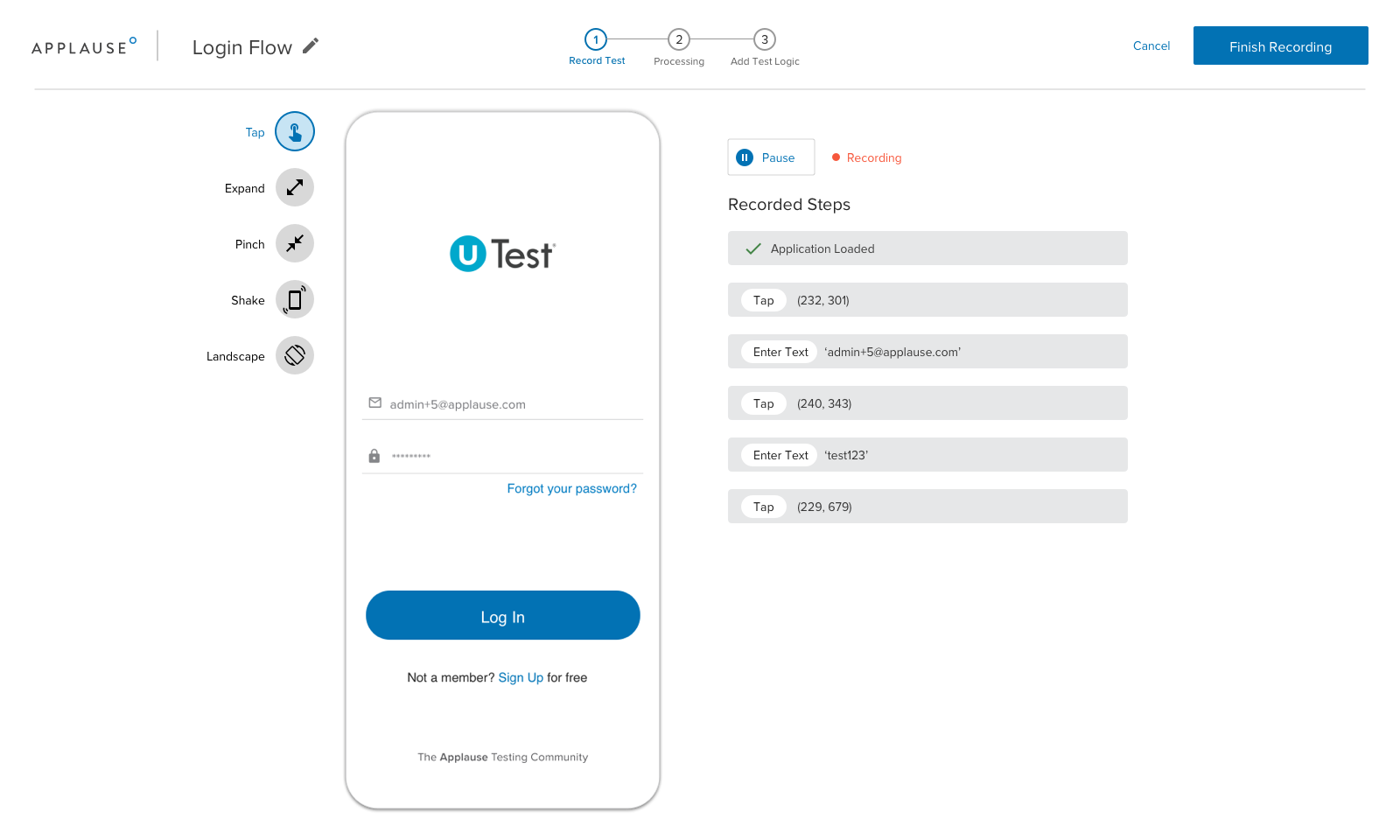

We agreed early on that Creating a Test was the core of the product, and it was particularly important to make this flow as clear and simple as possible.

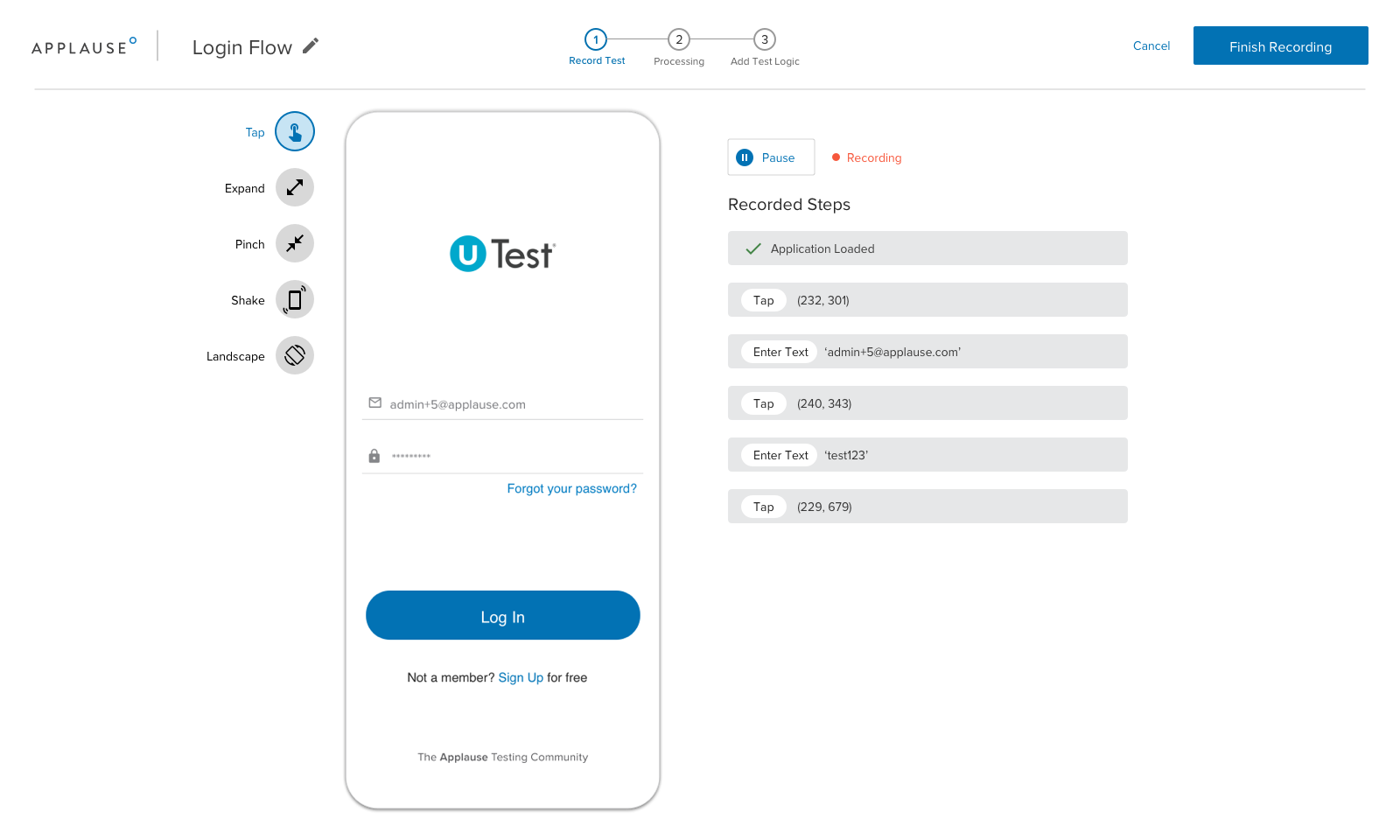

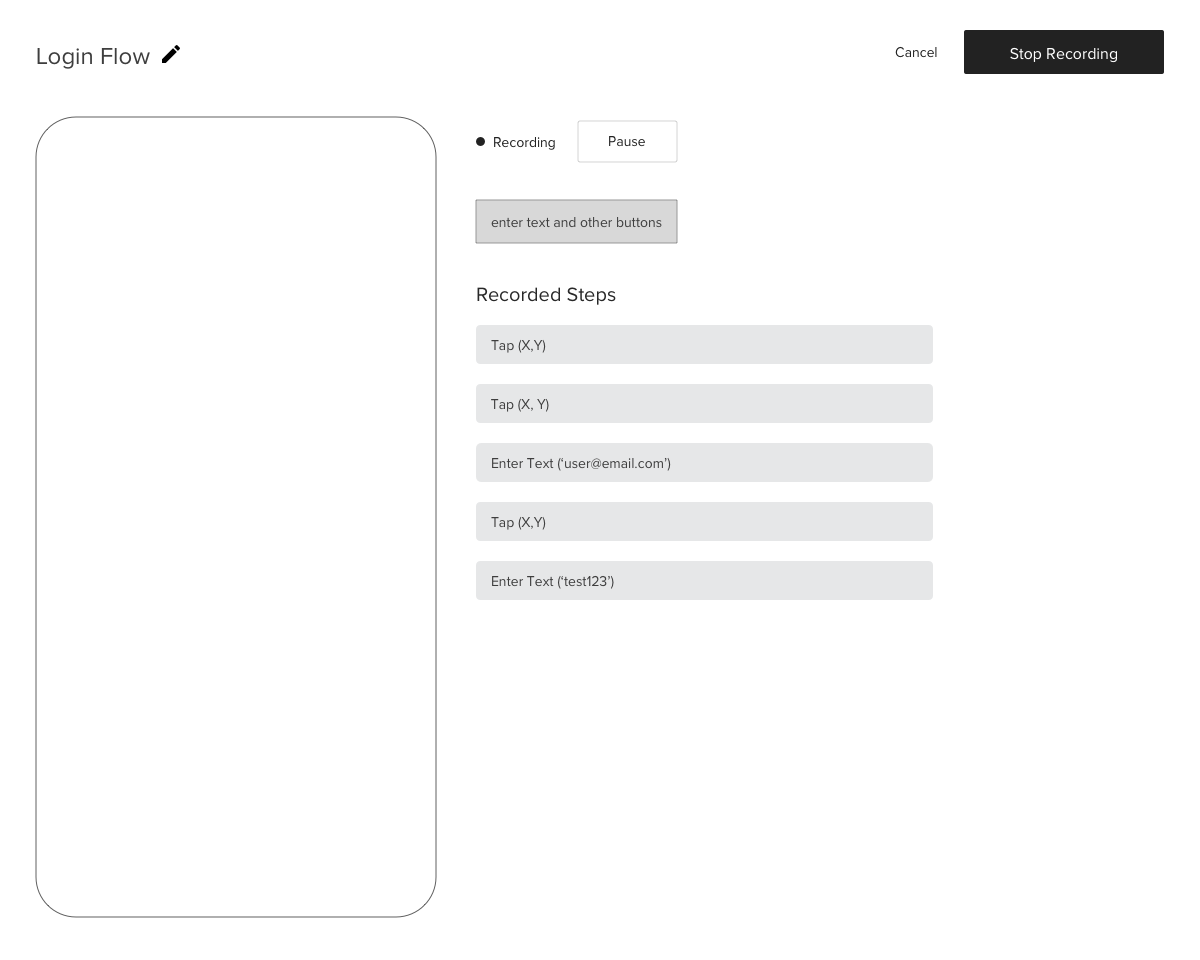

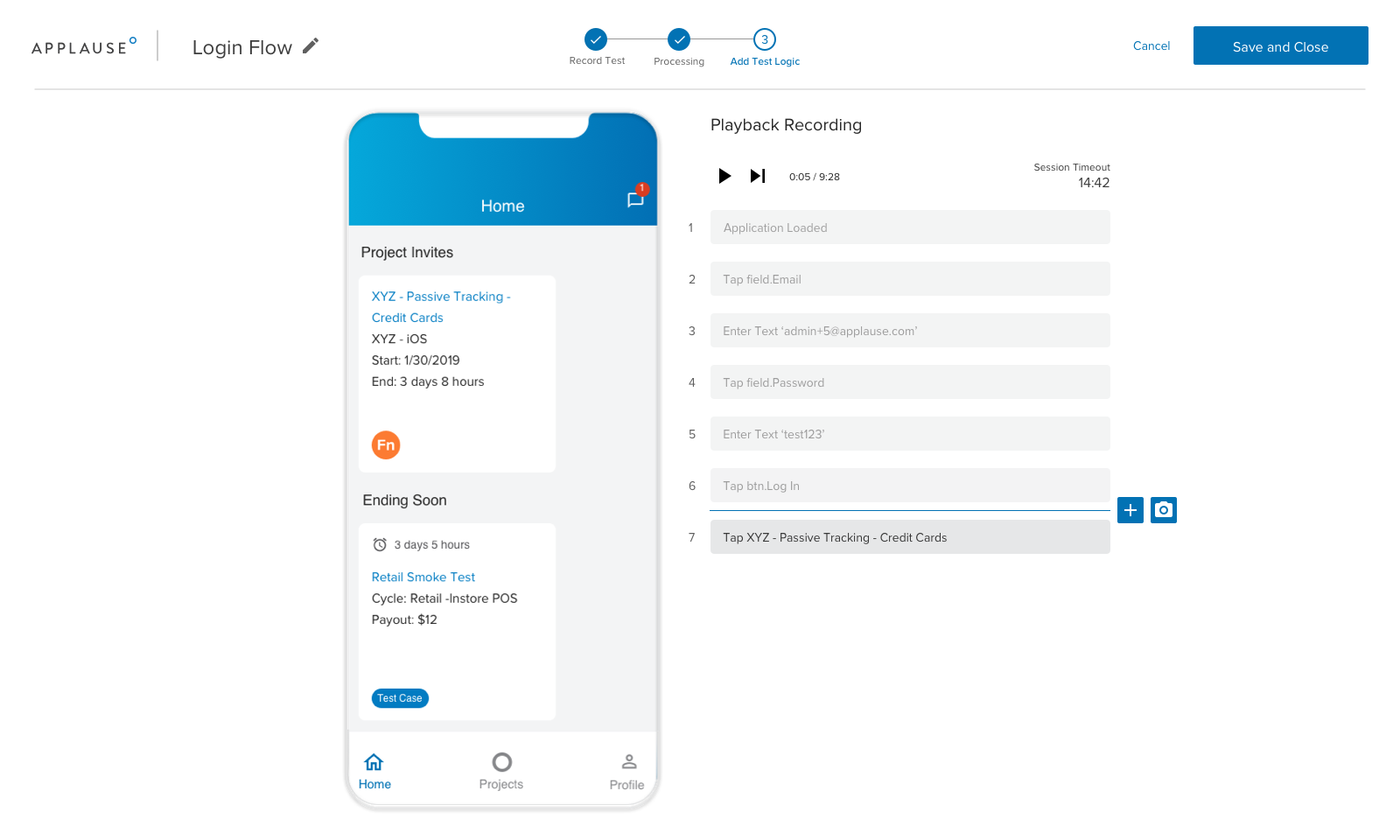

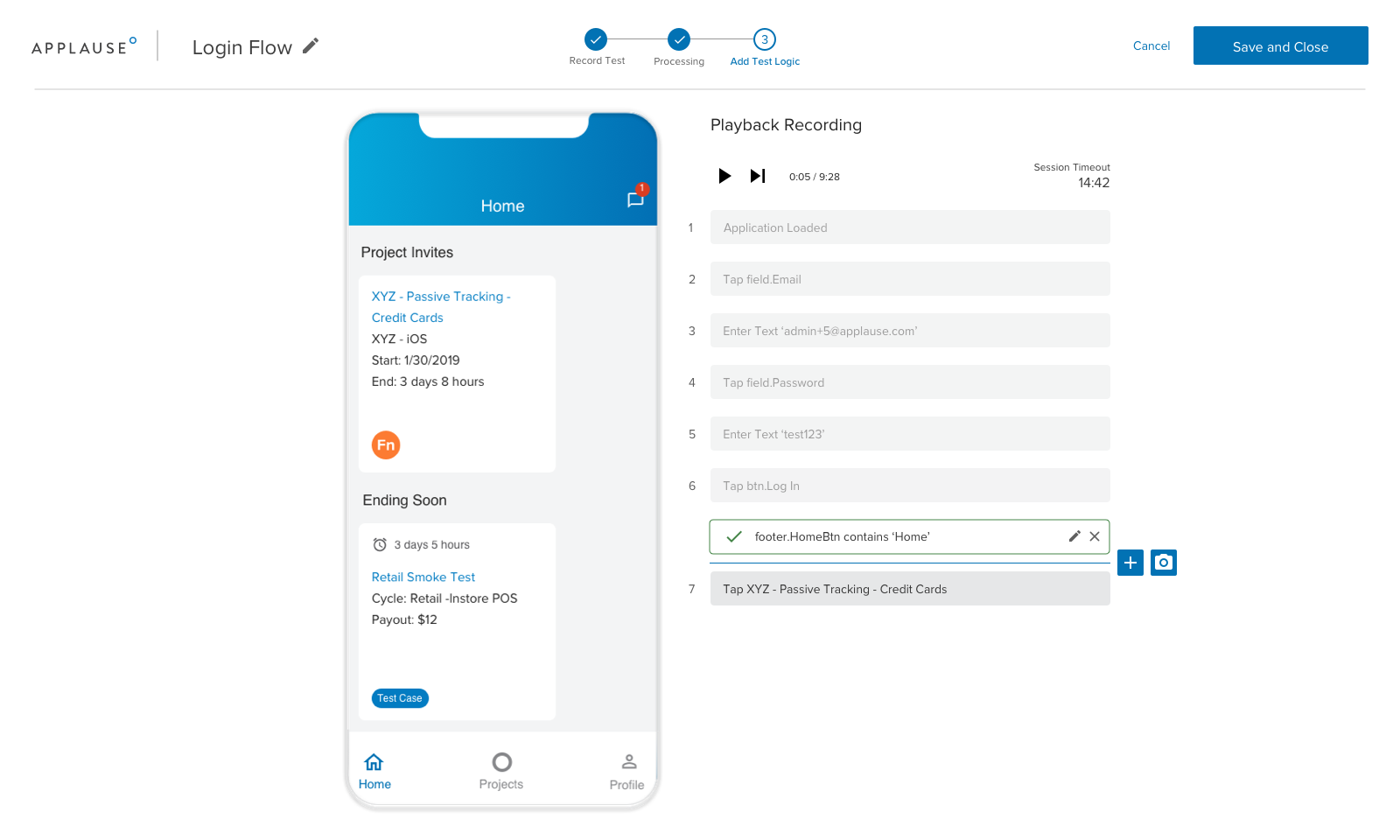

Creating a test consists of two distinct phases. Let's say you want to test the login flow of an app. First, using the in-browser device simulator, you log in the same way you would on a real mobile device.

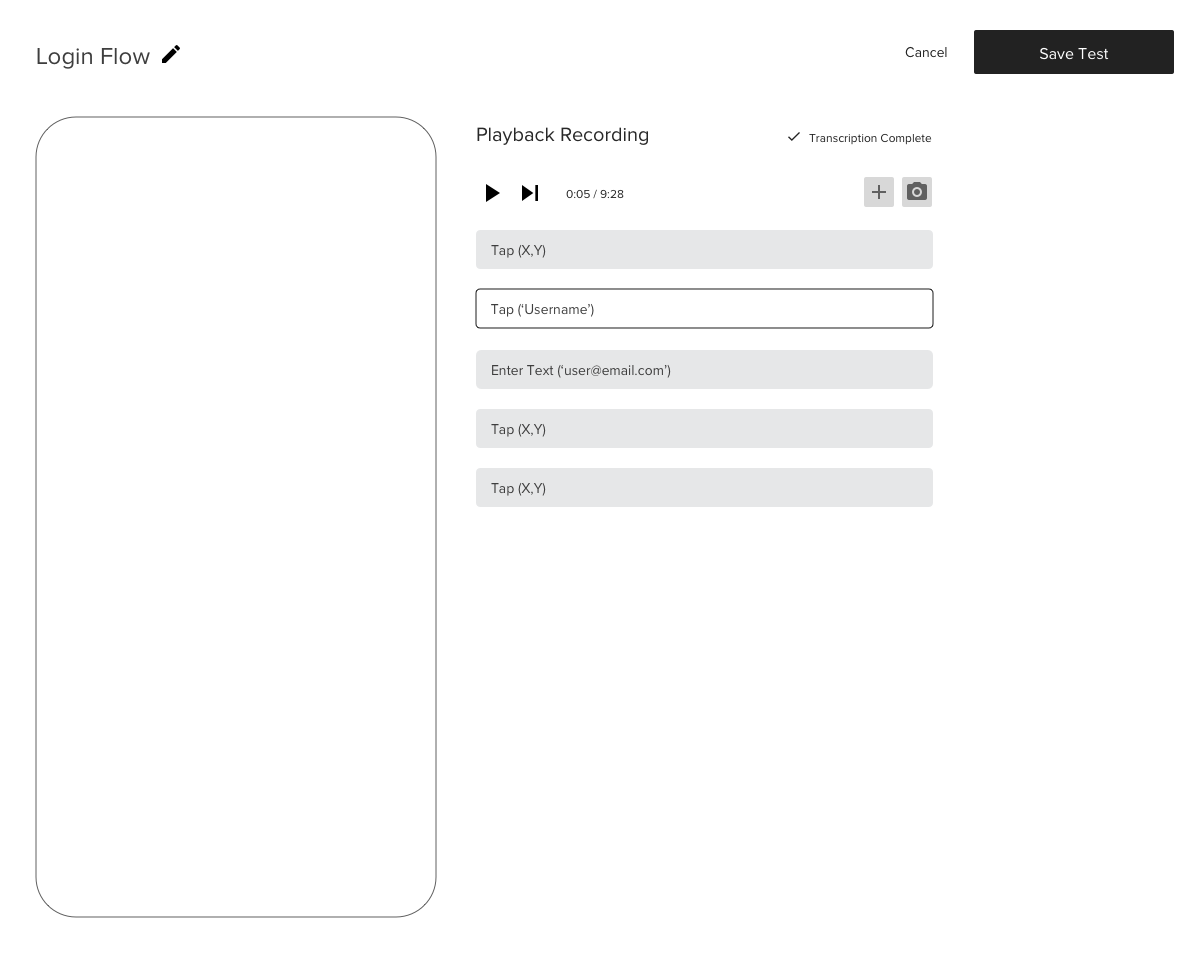

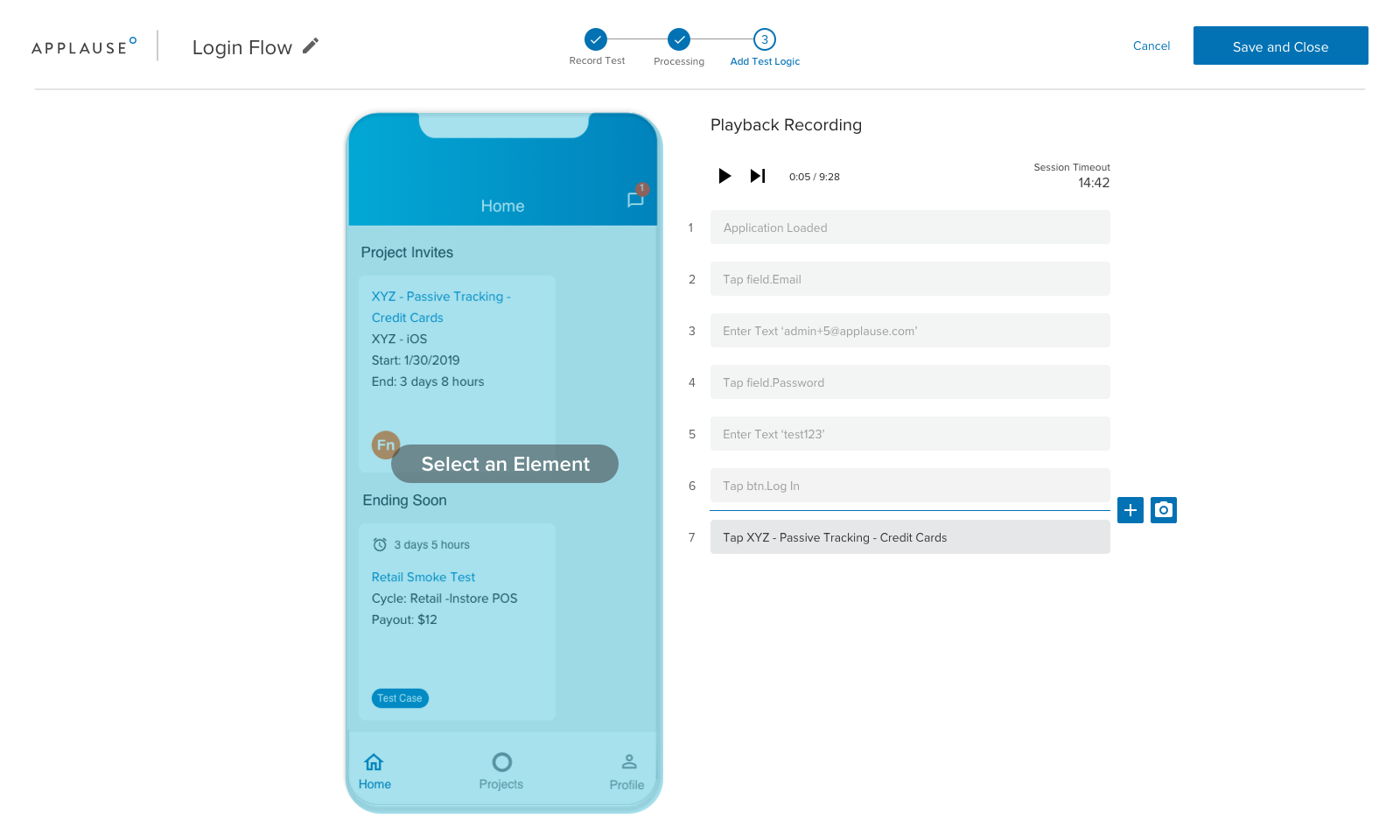

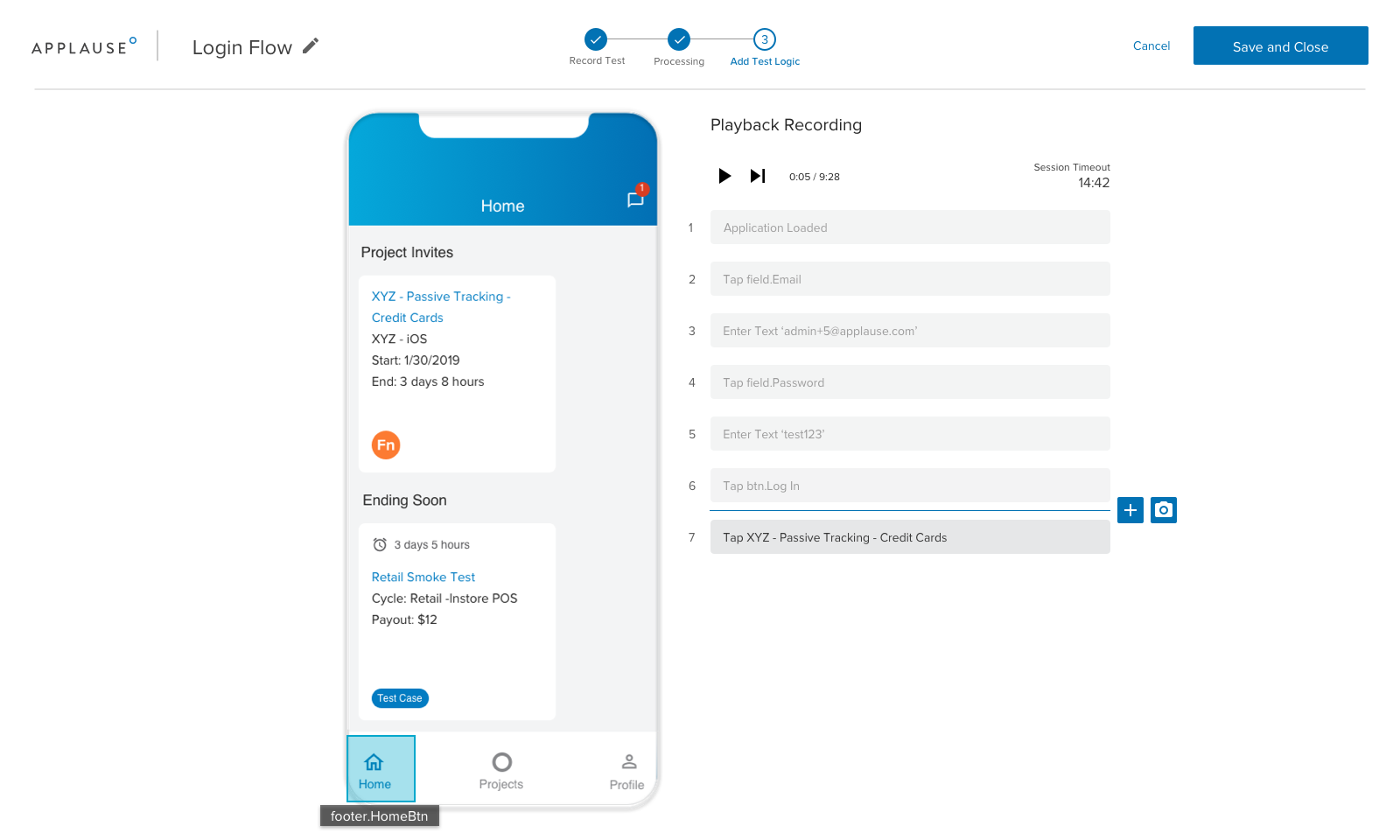

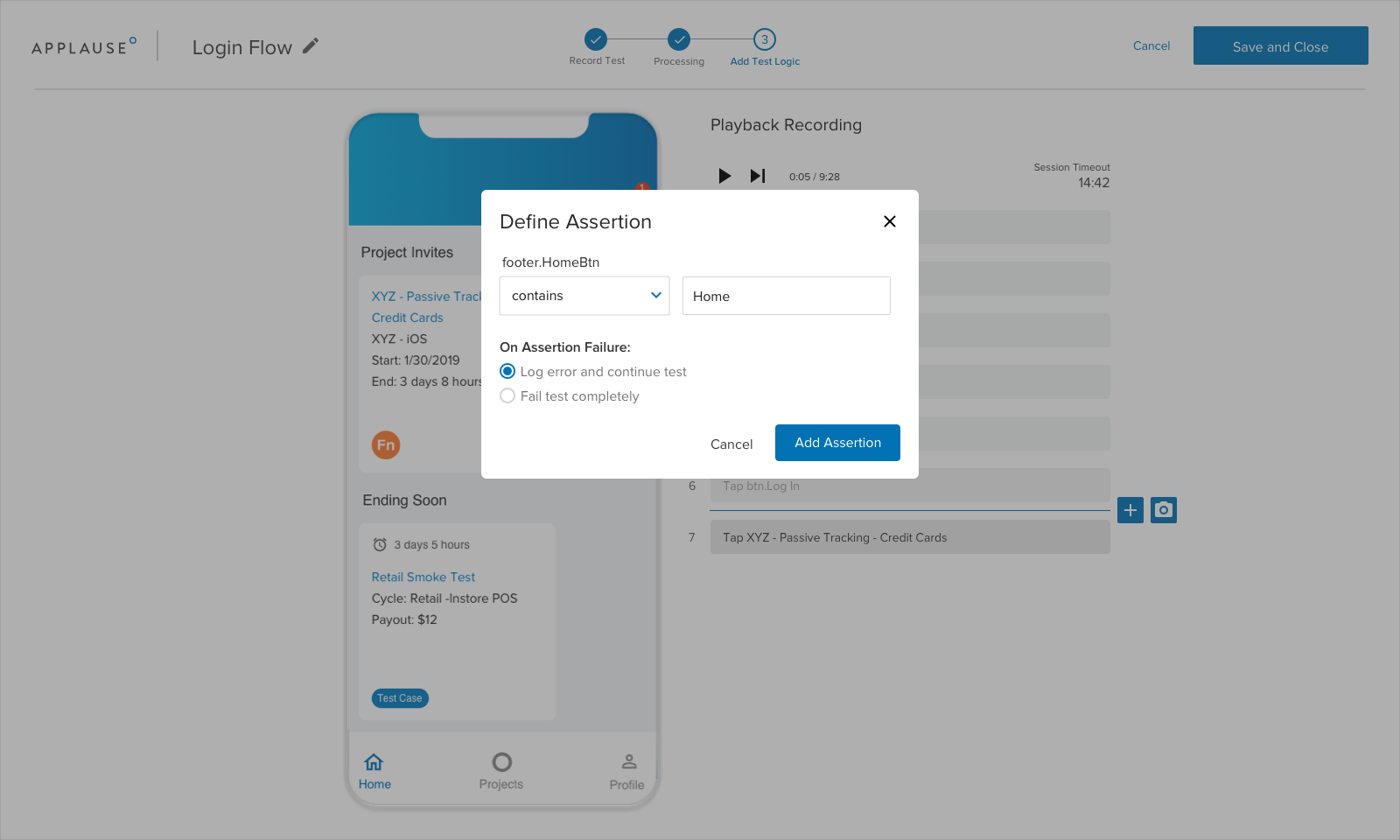

Next, you need to tell the computer how to recognize that you've successfully logged in. This is done using something called assertions, which are bits of logic tied to elements on the screen.

In this case, you would add an assertion to verify that the text 'Home' appears at the end of the test. If the computer re-runs the test and doesn't find the element containing 'Home', the test will fail.
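For readers who think in code, the idea behind an assertion can be sketched roughly as follows. This is only an illustration and not how Codeless Automation is implemented: the `TextAssertion` class, the `run_test` function, and the representation of a screen as a list of text strings are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TextAssertion:
    """Hypothetical assertion: holds when some on-screen element contains `expected`."""
    expected: str

    def check(self, screen_texts: List[str]) -> bool:
        # True if any element's text contains the expected string.
        return any(self.expected in text for text in screen_texts)


def run_test(final_screen_texts: List[str], assertions: List[TextAssertion]) -> str:
    """Return 'passed' only if every assertion holds on the final screen."""
    ok = all(a.check(final_screen_texts) for a in assertions)
    return "passed" if ok else "failed"


# Assumed example: the login test ends on a screen that should show 'Home'.
login_assertion = TextAssertion(expected="Home")
print(run_test(["Home", "Settings"], [login_assertion]))
print(run_test(["Sign in", "Forgot password?"], [login_assertion]))
```

The first call represents a re-run where the 'Home' element is found, and the second a re-run where login did not succeed, so the element is missing and the test fails.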

We went through a number of iterations of this flow, using regular feedback from the internal user group to guide our product and design decisions. Improvements that came out of these sessions included adding the progress indicator at the top, moving the action buttons to the left of the recording screen, and dynamically keeping the (+) assertion button next to the current step rather than above the list of steps.

Early Wireframes

Final Mockups

Recording a test

Adding assertions

Organizing and Running Tests

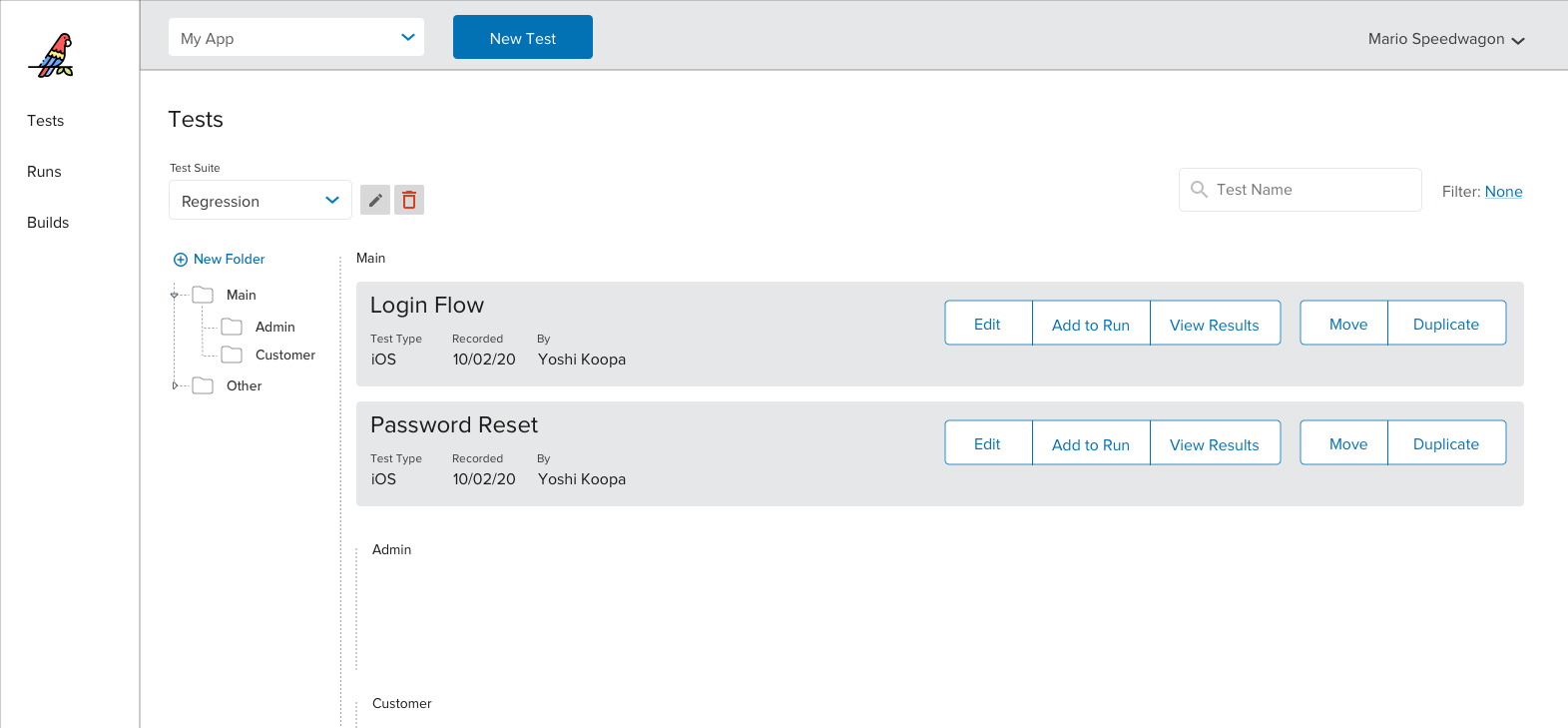

Once tests are recorded, it's crucial to be able to organize them across Test Suites. A Test Suite is a bucket of tests with a shared purpose.

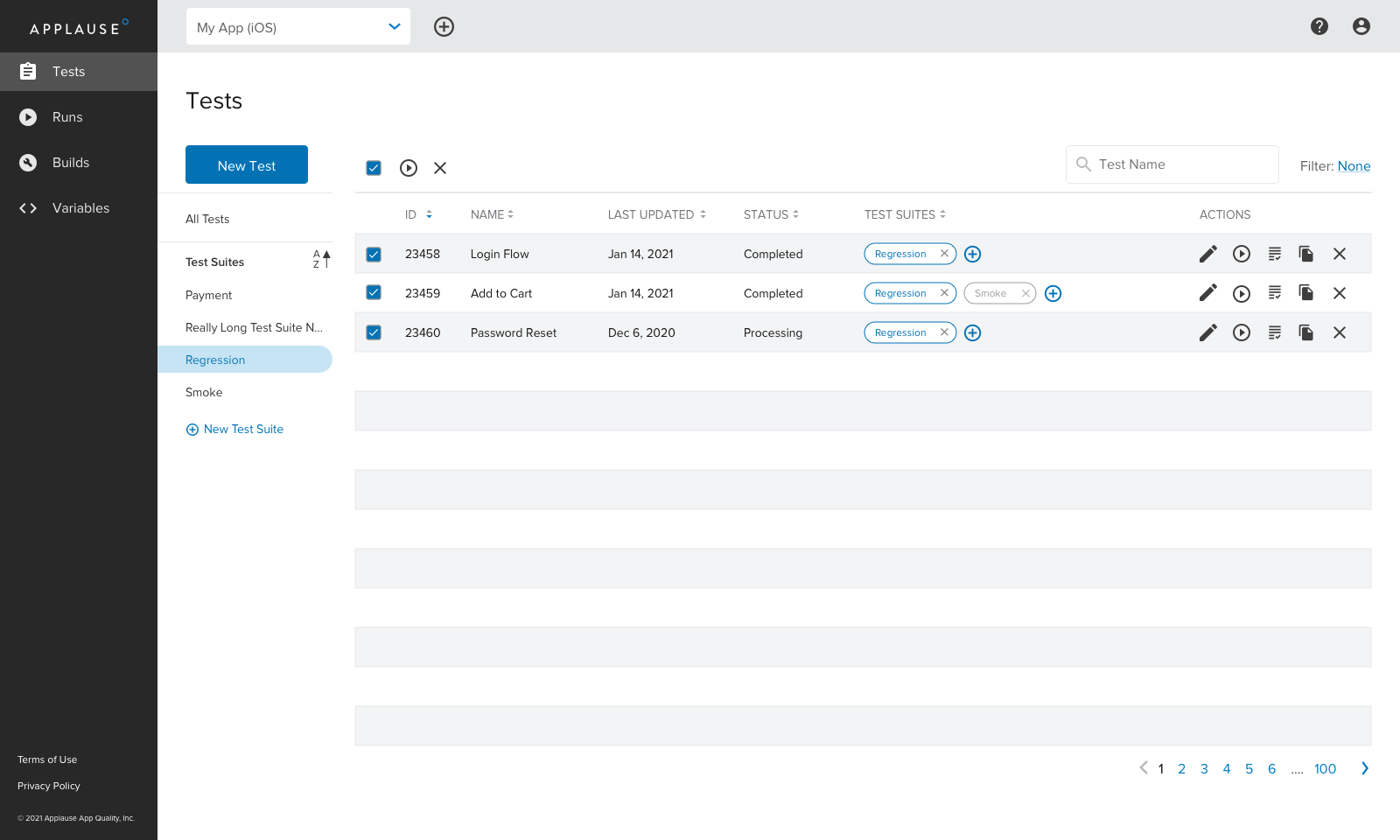

We initially explored using a folder structure for this, but it became clear that our users wanted to easily assign the same test to multiple suites. This led to using a tag approach instead.

My first approach was to use a list of cards for tests. Over time, we realized that using a table instead would allow for more flexibility in sorting and filtering across large quantities of tests.

Early mockup

Final mockup

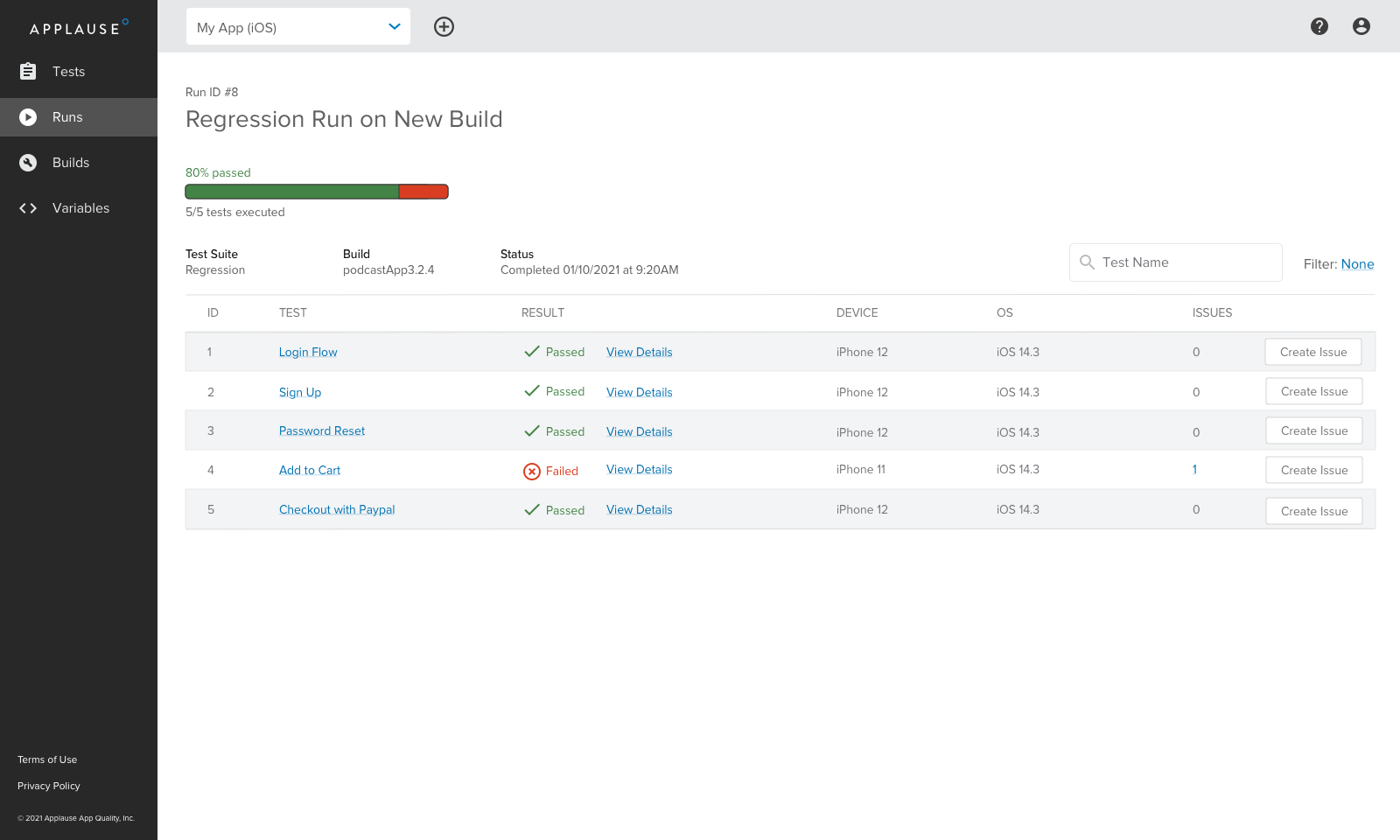

Running tests

Running tests was the final piece of the core experience that we needed for launch. Once a test is recorded, it can be executed automatically when the user uploads new versions of their app. I used some design patterns for test results that I had already established in separate projects. These had already been validated with users and had the benefit of maintaining consistency between Codeless Automation (a standalone tool) and our primary customer platform.

Success Metrics

There are several metrics we'll use to measure success from a UX and product perspective. The first, and perhaps most important, is ensuring that any user can successfully create and run their first test. Much as Facebook famously aimed for "7 friends in 10 days", I believe that if users reach this first milestone, they will come back again and again to use our product.

We'll also track overall product usage and engagement. More generally, as the product rolls out in beta and public launch, we'll run targeted usability sessions to validate decisions and find areas for improvement.